What Is Query Fan-Out in AI Search (And Why Keyword Lists Are Not Enough)

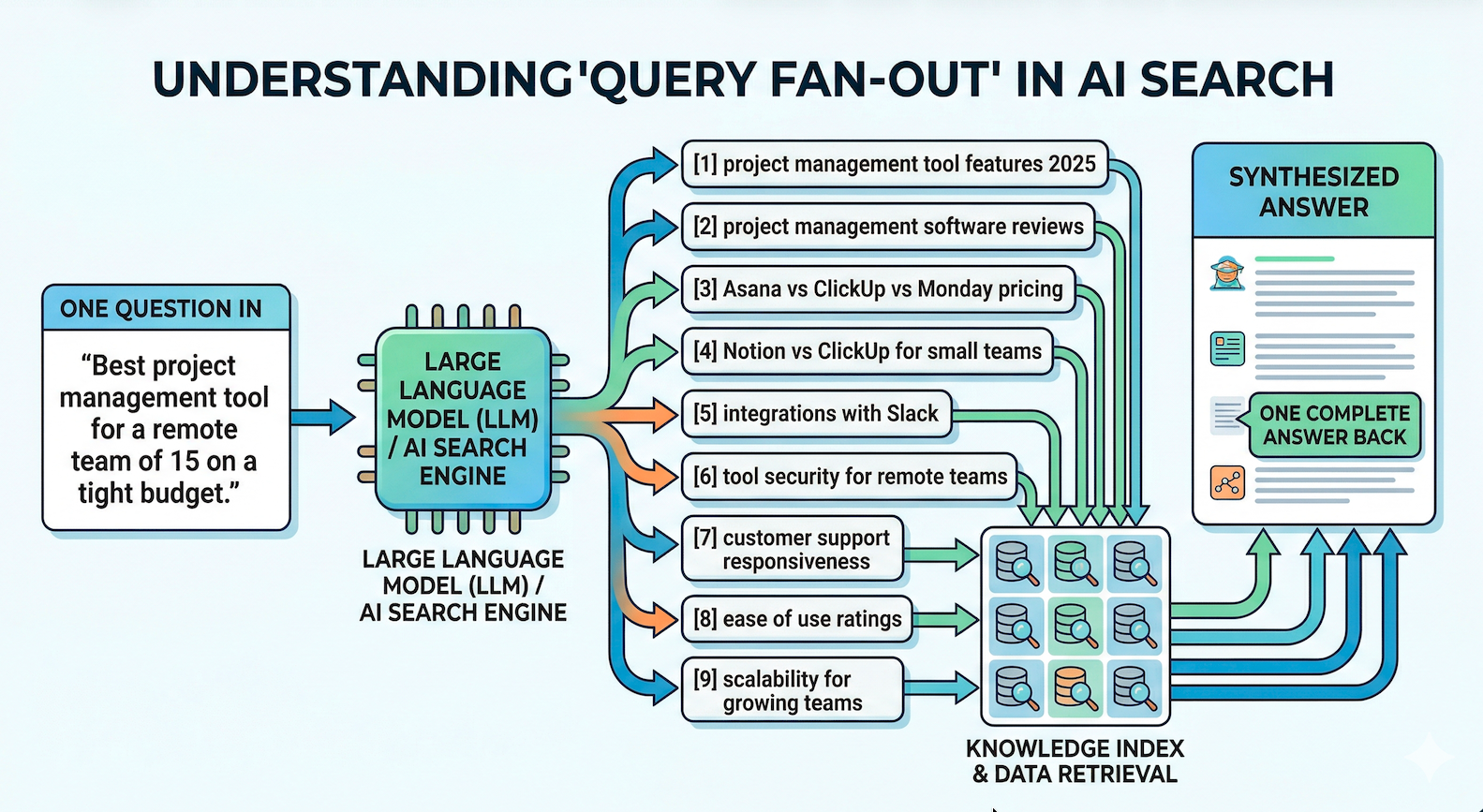

One prompt in. Nine searches out. One answer back. Here's what it means for your content.

One Question. Nine Searches. The Fan-Out

Last week I said AI search isn't a completely new skill set. Same problem as 1998, new surface.

This week we go one layer deeper. Into the thing that actually breaks how most marketers still think about search.

It's called query fan-out. And once you understand it, it completely changes how you approach AI search.

Your keyword list is lying to you

You have a list. Maybe 100 terms. Maybe 500. You rank for them in Google. Some even bring decent traffic. You check your rank tracker every Monday like a good little marketer.

But your LLM visibility is weak.

When you actually open ChatGPT and ask a question your customers would ask, your brand or product doesn't show up.

Why?

Because the AI isn't searching for the thing you think it's searching for.

When someone types "best project management tool for a remote team of 15 on a tight budget" into ChatGPT or Google AI Mode, the model doesn't run that query. It runs a bunch of smaller ones you never tracked. Then stitches the answers together.

That's fan-out.

- One question or prompt in.

- The LLM breaks it down into many searches.

- One answer back.
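If it helps to see the mechanics, here is a toy Python sketch of that loop. It rests on loud assumptions: the sub-queries are hard-coded (in a real system the LLM writes them), and `INDEX`, `search()`, and the example.com URLs are made up, with simple word matching standing in for a real search backend. Neither OpenAI nor Google has published its retrieval code, so don't read this as how they actually work.

```python
# Toy fan-out: several sub-queries -> one search each -> one merged source list.
# Hypothetical: INDEX, search(), and the URLs are stand-ins for a real backend.

INDEX: dict[str, str] = {
    "https://example.com/best-pm-tool": "best project management tool for remote teams",
    "https://example.com/pricing": "asana vs clickup vs monday pricing and free plan comparison",
    "https://example.com/slack": "project management tools with integrations with slack",
}


def search(sub_query: str) -> list[str]:
    """Return URLs whose text contains every word of the sub-query."""
    words = set(sub_query.lower().split())
    return [url for url, text in INDEX.items() if words <= set(text.lower().split())]


def answer_with_fan_out(sub_queries: list[str]) -> list[str]:
    """Run one search per sub-query and merge results, keeping first-seen order."""
    cited: list[str] = []
    for sub_query in sub_queries:
        for url in search(sub_query):
            if url not in cited:
                cited.append(url)
    return cited


# In a real system the model generates these from the user's prompt.
sub_queries = [
    "best project management tool for remote teams",
    "free plan comparison",
    "integrations with slack",
    "tools with time tracking",
]

if __name__ == "__main__":
    print(answer_with_fan_out(sub_queries))
```

Notice the last sub-query matches nothing in the index. That's exactly the kind of gap a keyword list never shows you.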

What it actually looks like

Nectiv ran an analysis of about 8,000 prompts across Google's Gemini 3 API and ChatGPT's search behavior. Chris Long, their co-founder, presented the results on a recent AirOps webinar.

Here's what happens when someone asks something like "best project management tool for a remote team." The system fans out into a tree of smaller queries. Roughly like this:

- project management tool features 2025

- project management software reviews

- best project management tool for remote teams

- Asana vs ClickUp vs Monday pricing

- free plan comparison

- Notion vs ClickUp for small teams

- integrations with Slack

- Trello alternatives for remote work

- tools with time tracking

(Tip: later in this email I'll show you how to make fan-outs visible.)

That's what a fan-out can look like.

Nine searches. For one question.

And nine isn't even high for Google. Software came out as one of the most fan-out-heavy verticals in the study, averaging around 12 sub-queries per prompt. Local businesses triggered only about 4. ChatGPT is much lighter: it averages about 2 and maxes out around 4.

Now here is the part that should make you sit up.

A separate study Chris referenced on the same webinar looked at roughly 500 to 1,000 prompts. They ran all the fan-out queries through a traditional SEO tool. Result: about 95% of those queries had zero search volume.

Not in Ahrefs. Not in Semrush. Not in any other tool.

The queries AI is actually using to find your brand are queries you've never researched, never tracked, and probably never written content for.

That list you check every Monday might have almost nothing to do with how AI is actually searching for your category.

Pretty amazing.

Why this breaks the old SEO brain

Old SEO brain: "I want to rank for best project management tool. So I write a 2,500 word article, optimize the H1, add some H2s, stuff in internal links. Done."

AI search brain: "For my page to even get considered, it has to answer a tree of 9 to 12 sub-questions at once. Features. Pricing. Remote fit. Integrations. Free plans. Comparisons. Use cases. And each answer has to be extractable in isolation."

Google used to reward one great page per keyword. LLMs reward answer completeness across a whole cluster.

Answer 3 of 9 fan-outs → you get cited sometimes. Answer 8 of 9 → you become the default source. Answer 9 of 9 plus 3 adjacent questions the model didn't even ask → you start showing up in answers for questions you never targeted.

This is why "topical authority" is the way to go.

The entity layer underneath

One more thing, because this is the mental model that matters most.

LLMs DON'T really think in keywords. They think in entities and attributes.

"Project management software" is an entity. "Price, integrations, free plan, best for remote teams" are all attributes.

When the model fans out, it's not generating random questions. It's walking down a list of known attributes for that entity and running one search per attribute. Software gets 12 fan-outs because it has a lot of attributes. A pizza restaurant gets 4 because it doesn't.
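Here is that mental model as a toy Python sketch. Assumption alert: the attribute table and `fan_out()` are hypothetical illustrations of the entity-and-attributes idea, with invented attribute lists. They are not how Gemini or ChatGPT actually generate their sub-queries.

```python
# Toy model: entity -> known attributes -> one sub-query per attribute.
# Hypothetical: ENTITY_ATTRIBUTES and fan_out() are illustrations only.

ENTITY_ATTRIBUTES: dict[str, list[str]] = {
    "project management software": [
        "pricing", "features", "integrations", "free plan",
        "reviews", "time tracking", "for remote teams",
    ],
    "pizza restaurant": ["menu", "hours", "delivery", "reviews"],
}


def fan_out(entity: str, qualifier: str = "") -> list[str]:
    """Return one sub-query per known attribute of the entity."""
    attributes = ENTITY_ATTRIBUTES.get(entity, [])  # unknown entity -> no fan-out
    suffix = f" {qualifier}" if qualifier else ""
    return [f"{entity} {attribute}{suffix}" for attribute in attributes]


if __name__ == "__main__":
    for query in fan_out("project management software"):
        print(query)
    print(len(fan_out("pizza restaurant")))  # fewer attributes -> fewer searches
```

The point of the sketch: the number of searches is driven by how many attributes the entity has, not by how long the user's prompt is.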

So you might already suspect that your job isn't really "write content that answers the question."

And you'd be kinda right.

Your job is to make sure every important attribute for your entity is clearly stated, structured, and extractable on your page.

- Define the entity.

- List the attributes.

- Answer each one in a form the model can lift cleanly.

That's how it works.

What to actually do

Three moves. In order.

1. Discover your fan-outs.

You can't see the actual fan-outs, but here are three ways to get really close to reality without an AI search tool:

1. Force a pseudo fan-out

Prompt the model like this:

"Break this into sub-questions and answer each separately before combining"

2. Ask for the search process explicitly

Example:

"What sub-queries would you generate to answer this?"

You'll get something like:

- Sub-question 1

- Sub-question 2

- Sub-question 3

3. And my favorite and most detailed prompt:

Act like an AI search engine. Break this query into every facet, sub-intent, comparison, and long-tail variant you'd need to retrieve content for. Return a hierarchical tree.

You get about 85 to 95% of what the real system generates.

Pick 10 to 20 prompts that matter for your business and extract their fan-outs as shown above.

That's your real AI keyword list.
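Once you've run the prompts, you need to turn the replies into one list. A minimal Python sketch, assuming each reply comes back as a plain-text tree of `-` bullets (models vary, so check your own output first). `build_ai_keyword_list()` is a hypothetical helper: it keeps every bullet at every depth, lowercases it, and drops duplicates across prompts.

```python
# Hypothetical helper: merge the bullet trees from several model replies
# into one deduplicated "AI keyword list". Assumes '-' bullets; other
# bullet styles, headings, and commentary lines are skipped.


def build_ai_keyword_list(replies: list[str]) -> list[str]:
    """Return every '-' bullet from all replies, lowercased, first-seen order."""
    seen: set[str] = set()
    queries: list[str] = []
    for reply in replies:
        for line in reply.splitlines():
            stripped = line.strip()  # removes tree indentation
            if not stripped.startswith("-"):
                continue
            query = stripped.lstrip("-").strip().lower()
            if query and query not in seen:
                seen.add(query)
                queries.append(query)
    return queries


if __name__ == "__main__":
    reply = """best project management tool for a remote team
- pricing
  - Asana vs ClickUp vs Monday pricing
  - free plan comparison
- integrations
  - integrations with Slack"""
    for query in build_ai_keyword_list([reply]):
        print(query)
```

Keeping parent nodes like "pricing" alongside their children is a deliberate choice here: the parent usually maps to a page section, the children to the answers inside it.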

2. Map your content against the tree.

For each fan-out, ask: do we already have content that answers this?

If yes, can the AI actually reach and extract from it?

It's a kind of gap analysis. Most teams find they already cover 40 to 60% of the tree. They just never realized it. The other half is your content roadmap for the next quarter.
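A spreadsheet works fine for this, but here's the same gap analysis as a small Python sketch. `FanOutRow`, `gap_report()`, and the example.com URLs are hypothetical. You fill in which page answers each fan-out and whether the AI can reach and extract it; the script sorts the tree into covered, fix-existing, and write-new.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FanOutRow:
    query: str
    page_url: Optional[str]    # page that answers this fan-out, or None
    extractable: bool = False  # can the AI reach the page and lift the answer?


def gap_report(rows: list[FanOutRow]) -> dict[str, object]:
    """Split fan-outs into covered, fix-existing, and write-new buckets."""
    covered = [r.query for r in rows if r.page_url and r.extractable]
    fix_existing = [r.query for r in rows if r.page_url and not r.extractable]
    write_new = [r.query for r in rows if not r.page_url]
    coverage = len(covered) / len(rows) if rows else 0.0
    return {"coverage": coverage, "fix_existing": fix_existing, "write_new": write_new}


if __name__ == "__main__":
    rows = [
        FanOutRow("free plan comparison", "https://example.com/pricing", True),
        FanOutRow("integrations with slack", "https://example.com/integrations"),
        FanOutRow("tools with time tracking", None),
    ]
    print(gap_report(rows))
```

"fix_existing" is the quick-win bucket: the content exists, it just isn't reachable or liftable yet. "write_new" is the roadmap.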

3. Restructure pages around attribute coverage, not keyword density.

A page targeting "best project management tool for remote teams" should have a clear section for every major attribute. Pricing. Features. Integrations. Free plan. Comparisons. Use cases.

Each section self-contained. Each answer short enough to lift as a clean chunk.

And don't forget.

You are not writing for a human who scrolls. You are writing for a retrieval system that samples your page in chunks and decides which ones are worth quoting.
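To sanity-check a draft, you can split it into sections and flag the ones too long to lift as a single chunk. A minimal Python sketch, assuming the draft uses one `## ` Markdown heading per attribute. The 120-word `MAX_WORDS` limit is a made-up rule of thumb, not a published limit of any retrieval system; real systems chunk pages in their own undisclosed ways.

```python
MAX_WORDS = 120  # hypothetical "clean chunk" limit, not a published number


def split_sections(page_text: str) -> list[tuple[str, str]]:
    """Split a Markdown draft on '## ' headings into (heading, body) pairs."""
    sections: list[tuple[str, str]] = []
    heading = "(intro)"
    body: list[str] = []

    def flush() -> None:
        text = "\n".join(body).strip()
        # Keep every real heading, even an empty one, so missing answers show up;
        # drop the intro only when nothing comes before the first heading.
        if text or heading != "(intro)":
            sections.append((heading, text))

    for line in page_text.splitlines():
        if line.startswith("## "):
            flush()
            heading, body = line[3:].strip(), []
        else:
            body.append(line)
    flush()
    return sections


def chunk_report(page_text: str, max_words: int = MAX_WORDS) -> list[tuple[str, int, bool]]:
    """Return (heading, word count, fits in one chunk?) for each section."""
    report = []
    for heading, text in split_sections(page_text):
        words = len(text.split())
        report.append((heading, words, words <= max_words))
    return report


if __name__ == "__main__":
    draft = "## Pricing\nStarts at $10 per user.\n## Integrations\nWorks with Slack."
    for heading, words, fits in chunk_report(draft):
        print(f"{heading}: {words} words, {'ok' if fits else 'split or tighten'}")
```

A section with zero words is worth a look too: it usually means the heading promises an attribute the page never actually answers.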

The bottom line

Fan-out is the single biggest reason traditional keyword tracking is breaking.

You are not competing for one query anymore. You are competing for a tree of 5 to 15 sub-queries, most of which have zero search volume in any tool you currently use.

So to stay relevant in AI search you have to figure this out.

Up next

Next email: how to actually structure a page so LLMs can extract your answers cleanly. This is where we get into implicit schema, which is probably the most underrated concept in AEO right now.

You don't need JSON-LD to speak the language machines read. You just need to write in a way the model already understands.

Talk soon.

End of Lesson 2

This Week's Signals

Four moves this week. All pointing the same direction: AI search is now infrastructure.

Google AI Mode is in Search Console. Impressions and clicks now live in the GSC Performance report. Gemini 3 powers AI Overviews globally. Google also dropped "preferred sources" docs. Baseline your AI Mode data this week.

Human content still wins 8 to 1. Semrush analyzed 42,000 posts. Humans hit Position 1 80% of the time. Pure AI: 9%. A 16-month study widened the gap to 31 points. The cause: AI-only content earns 61% fewer editorial backlinks. AI drafts with real human editing closed the gap to within 4%. Google isn't detecting AI text. It's detecting missing editorial judgment.

1.08% AI traffic. 44% of B2B brands invisible. Conductor analyzed 3.3 billion sessions across 13,770 domains. ChatGPT drives 87.4% of AI referrals. AI-referred visitors convert at 2x organic in 1/3 the sessions. Separately, DerivateX scored 50 B2B SaaS companies. 44% scored below 50/100. Clio at 89, LeadSquared at 2. Same competitive set. The 44% is the number that should scare you.

The AEO tool market grew 2,000% in 10 months. G2 went from 7 tools to 150+. $31M in VC. $337/month average. But the measurement layer is shaky. Superlines tracked AI visibility dropping 35% in 4 weeks. Perplexity and ChatGPT scored the same brand 14.8x differently on sentiment. Track at least two engines. Monitor weekly. Baseline before anything.

Tool Spotlight: Pendium. Launched March 30. Tracks which sources AI cites when answering your buyer persona's questions, not just broad prompt sets. That's the gap between "we're cited" and "we're cited when our buyer is asking." Worth a trial. Find it in the directory.

The takeaway: Google's GSC integration is the line in the sand. Baseline now. Monitor weekly. Prioritize structured, citable content. The teams who move this quarter will have the data advantage when AI referral traffic doubles. And the Conductor data suggests it will.

Sources

Google Gemini 3 + GSC AI Mode

Google Blog: AI Mode & AI Overviews update

Google Blog: Gemini 3 Flash in AI Mode

Google Search Central: Latest updates

Google Search Central: AI features documentation

Human vs AI Content

Search Engine Land: Human content ranks higher than AI study

Digital Applied: 16-month AI vs human ranking study

Conductor + DerivateX Benchmarks

Conductor 2026 AEO/GEO Benchmarks Report

Business Wire: Conductor report announcement

DerivateX B2B SaaS invisibility study

AEO Tool Market + Volatility

G2: Inside the 2,000% growth of the AEO category

G2: Answer Engine Optimization category

Superlines: AI search statistics

Averi.ai: ChatGPT vs Perplexity vs Google AI Mode B2B SaaS benchmarks