Why the AI Cited Your Competitor (And What They Did to Earn It)

How Answer Engine Optimization (AEO) replaces backlinks with consensus, and the earned media strategy you need to rank in AI search.

We have already arrived at part 4 of our email course.

Last week we covered implicit schema and a checklist to make your pages extractable.

This week we zoom out. It is all about citations, consensus, RAG, and what you can do to get cited by AI more often.

You can have the best-structured page in your category and still not get cited.

Why?

Because structure only solves half the problem. The other half is authority. And in AI search, authority works differently than you think.

Backlinks are not citations

In SEO, authority means backlinks. A link from the New York Times homepage is a big win. It usually lifts your whole domain.

In AEO, it's different: that same link might do almost nothing for you.

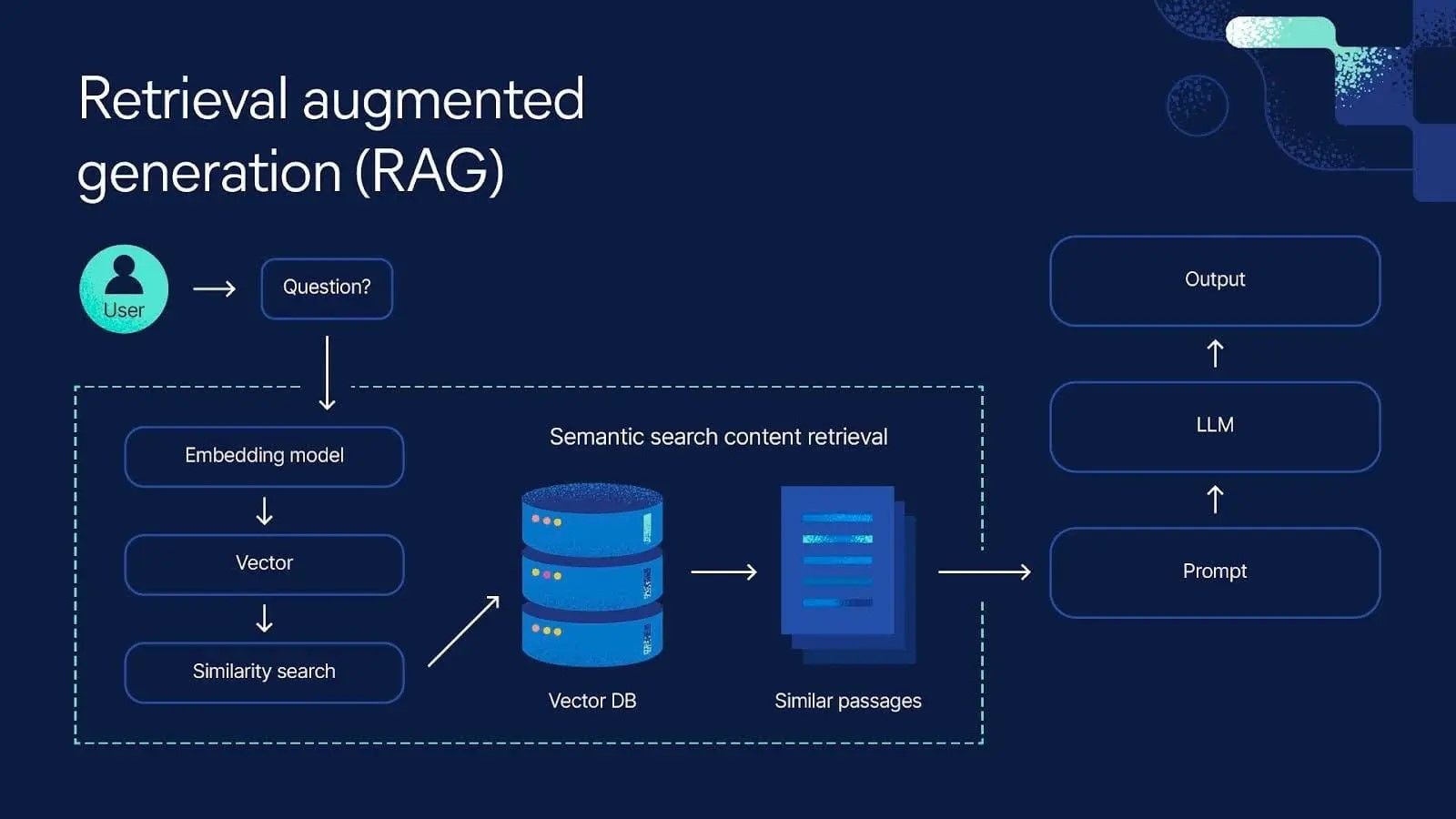

When an LLM builds an answer, it doesn't check your backlink profile. It uses Retrieval-Augmented Generation (RAG). It runs a search (remember fan-out from Email 2), pulls the top results, and then looks at what those retrieved documents say about you.

In case you're wondering what RAG means exactly (I was):

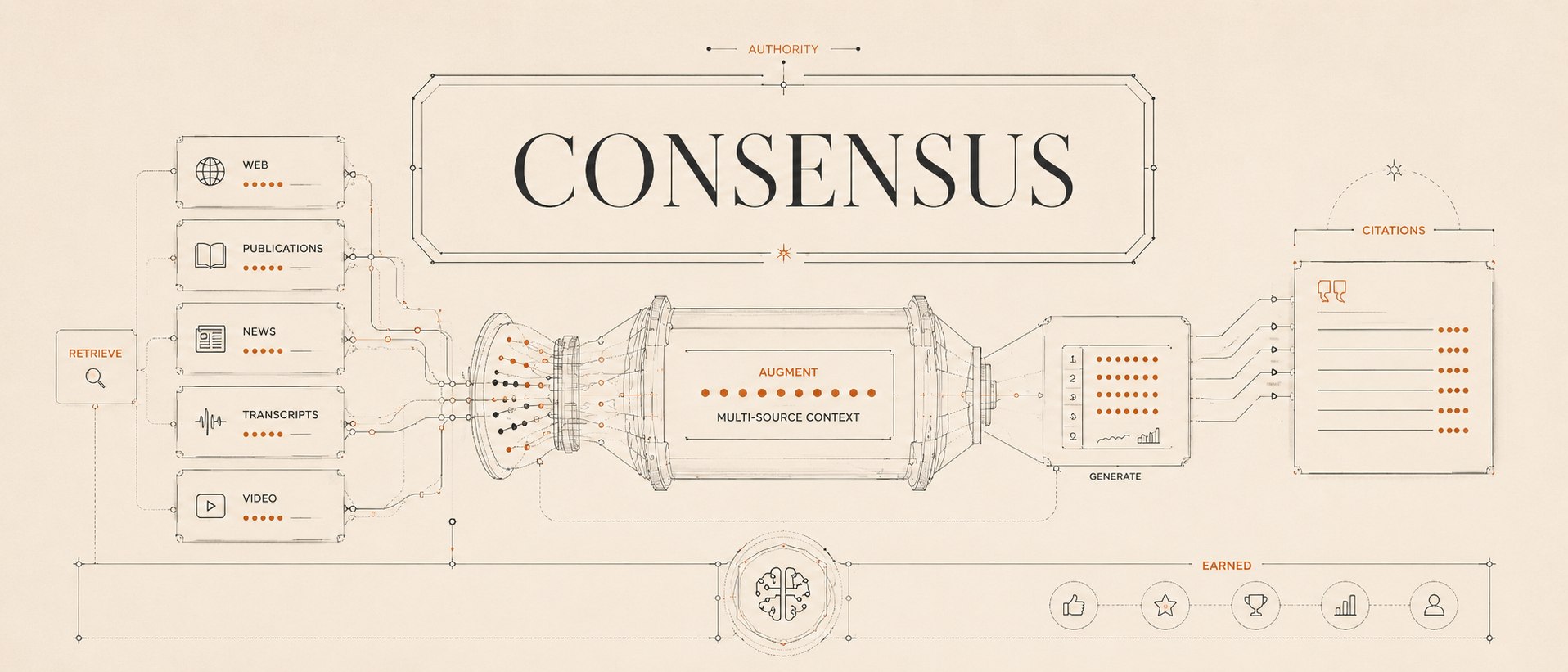

- Retrieve: The system finds the documents (your "search" step).

- Augment: It "glues" those documents to the user's question.

- Generate: The model writes the answer using that "glued" context.
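To make the three steps concrete, here is a minimal Python sketch of the retrieve and augment steps. It is a toy built on loud assumptions: retrieval is plain word overlap (real systems use search indexes or embeddings), the documents and URLs are made up, and `generate` is only a stub where a real system would call a language model.

```python
# Toy RAG sketch: retrieval by word overlap, augmentation by prompt-building.
# Documents and URLs are made-up examples; generate() is only a placeholder.

def retrieve(query, documents, k=3):
    """Return up to k (url, text) pairs that share the most words with the query."""
    query_words = set(query.lower().split())
    scored = []
    for url, text in documents.items():
        overlap = len(query_words & set(text.lower().split()))
        if overlap > 0:
            scored.append((overlap, url, text))
    # Highest overlap first; ties keep their original order (sort is stable).
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(url, text) for _, url, text in scored[:k]]


def augment(query, retrieved):
    """Glue the retrieved documents onto the user's question as context."""
    context = "\n".join(f"[{url}] {text}" for url, text in retrieved)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"


def generate(prompt):
    """Placeholder: a real system would send the prompt to an LLM here."""
    raise NotImplementedError("connect a language model to complete the G in RAG")


docs = {
    "publisher-a.example/cards": "best business credit card picks include Ramp and Brex",
    "publisher-b.example/cards": "our best credit card list features Ramp",
    "recipes.example/pasta": "how to cook pasta al dente",
}
hits = retrieve("best credit card", docs)
prompt = augment("best credit card", hits)
print(prompt)
```

Note that the prompt contains only what `retrieve` returned: a brand that never appears in the retrieved pages has no path into the generated answer.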

The model is not asking "does this site have authority?" It's asking "do multiple sources in my search results agree that this brand is the answer?"

That's a fundamentally different game.

In SEO, one link from the New York Times moves the needle. In AEO, what matters is being mentioned by as many of the specific URLs in the citation set as possible. Not who links to you. Who mentions you.

The credit card example

This is the example that made it click for me. It comes from an article by Graphite.io.

Ask ChatGPT: "What is the best credit card?"

The AI pulls citations from Forbes, NerdWallet, US News, and a few others. Then it checks: which brands appear across those sources?

- Ramp appears in Forbes. In NerdWallet. In US News. Three out of three.

- Brex appears in Forbes and US News. But not NerdWallet. Two out of three.

- Mercury? Only US News. One out of three.

Guess which brand gets recommended most often?

Yes, you guessed it.

Ramp.

Maybe Ramp has the best backlink profile, but that’s not the reason. Maybe Ramp's page is better structured, but that’s not the reason either.

It is because when the model triangulates across its citation sources, Ramp shows up everywhere. The model understands Ramp’s importance.

And this is what drives recommendations.
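The triangulation can be pictured as a simple count. The Python sketch below illustrates the idea only, not how ChatGPT actually weighs sources: it assumes every cited source counts equally and reports what share of the sources mention each brand, using the brand-to-source mapping from the credit card example.

```python
from collections import Counter

# Which brands each cited source mentions, following the credit card example.
citations = {
    "Forbes": {"Ramp", "Brex"},
    "NerdWallet": {"Ramp"},
    "US News": {"Ramp", "Brex", "Mercury"},
}


def coverage(citations):
    """Return (brand, share of sources mentioning it) pairs, highest share first."""
    total = len(citations)
    counts = Counter(brand for brands in citations.values() for brand in brands)
    return [(brand, n / total) for brand, n in counts.most_common()]


for brand, share in coverage(citations):
    print(f"{brand}: {share:.0%}")
# Ramp: 100%
# Brex: 67%
# Mercury: 33%
```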

Mercury could have the best product page in the world. Perfect implicit schema, beautiful tables, clear Q&A blocks.

But none of that matters if the model only finds Mercury in one out of three citation sources. The model doesn't trust a single source. It trusts patterns across sources.

That's the difference between owned and earned in AEO.

Owned vs earned: the framework that changes everything

This is probably the most important strategic distinction in AEO right now.

- Owned = your website. Your content. Your pages. The stuff you control directly.

- Earned = mentions of your brand on third-party sites. Reviews, Reddit threads, listicles, YouTube videos, editorial coverage, forum posts.

In traditional SEO, owned does most of the heavy lifting. You rank your own pages for your own keywords.

In AEO, it depends on the type of query.

For head terms (broad, competitive queries like "best credit card" or "best project management tool"), the citation sources are almost always publishers: Forbes, NerdWallet, G2, Wirecutter, Reddit. Product companies rarely appear directly. To win head terms, you need an earned strategy. You need those publishers to mention you.

For tail terms (specific, long queries like "best project management tool for freelancers who need Slack integration"), product companies appear much more often. The citations are your own pages, your competitors' pages, and maybe a review site or two. To win tail terms, you need an owned strategy. You need the right content on your site, structured well.

- The more general the question → the more earned matters.

- The more specific the question → the more owned matters.

(By the way, LLMs love specific, new information such as original research and proprietary data, not just generic summaries.)

Most teams only do owned. They write great content on their site and hope the AI picks it up.

This mainly works for the tail and not so much for the head. And the head is where the volume is.

Where the AI actually looks

So where do you need to show up?

Graphite's analysis of Webflow's AEO program found the following:

- Reddit appeared in 10% of ChatGPT citations.

- YouTube appeared in 5% of ChatGPT responses.

- YouTube appeared in 32% of Perplexity responses.

And according to Profound's analysis, listicles and comparison articles account for roughly 33% of all AI citations.

These are the "best X for Y" articles on publisher sites.

But my gut feeling is that this won’t work forever. So many people have jumped on the bandwagon of creating these articles that the AI no longer gains any new information from them. They become "slop" and will likely get cited less in the future.

And here is another one that surprised me:

Profound's research found that a relatively unknown site, eatthis.com, ranked far above Forbes for AI citations in the fast-food category, simply because it had better-structured, more specific content in the exact category the model was searching.

The model doesn't care about your domain rating. It cares about whether you show up in the right places for the right queries with the right information.

So what do you do with all of this information? And how do you actually build an earned presence?

The earned citation playbook with three tiers

Tier 1: Third-party review platforms (fastest impact)

Think G2, Capterra, Trustpilot, and industry-specific directories. These platforms are cited constantly by LLMs because they aggregate reviews and comparisons in a structured, extractable format. Make sure your profiles are complete, your reviews are current, and your product information is accurate. If you are in SaaS, G2 alone can shift your citation probability significantly.

Tier 2: Reddit, YouTube, and forums (medium effort, high payoff)

Reddit threads persist for years, and LLMs cite them heavily. (There's a reason Google agreed in 2024 to pay a reported $60M per year for real-time access to Reddit's Data API.) If your product is discussed positively on relevant subreddits, that thread becomes a citation source every time someone asks a related question.

YouTube works the same way. LLMs transcribe video content and use it for entity understanding. A well-titled YouTube review of your product becomes a citation source for the model.

The key with both: you can't fake this. Authentic participation and genuine reviews outperform anything promotional. Reddit communities especially will punish anything that smells like marketing.

Tier 3: Editorial and publisher coverage (highest effort, longest-lasting impact)

Getting mentioned in a Wirecutter review, a Forbes listicle, or an industry publication is the hardest play but also the most durable. These are the sources LLMs weight most heavily for head terms.

(This aligns with Google's Search Quality Rater Guidelines, which emphasize that your external reputation matters far more than what you say about yourself.)

The approach: structured access (send products for review, offer data for articles, pitch expert commentary), not paid placements. Editorial content also tends to keep earning AI citations longer, because sponsored content usually lacks the deep, objective comparisons that LLMs tend to pull from.

How to figure out your citation gap

Here's a tactical move you can make immediately:

- Pick your most important head query.

- Run it in ChatGPT or Perplexity and look at the sources cited in the answer. Write them down and ask: does our brand appear in those sources?

- If yes: you are in the game. Optimize from there.

- If no: that's your gap. Those sources are where you need to show up. Not on your own site. On theirs.

Then do the same for 5-10 more queries across head and tail. You'll start to see a pattern: the same third-party sources come up again and again for your category.

That's your citation map, and it's where your earned strategy should focus.
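Once you track more than a handful of queries, the gap check and the citation map are easy to script. A minimal Python sketch, assuming you have recorded by hand which domains each AI answer cited and which of those domains mention your brand. All queries, domains, and the `yourbrand.com` name are made-up placeholders, not real citation data.

```python
from collections import Counter

# Made-up example: for each query you ran, the domains the AI answer cited.
cited_sources = {
    "best project management tool": ["g2.com", "reddit.com", "forbes.com"],
    "best project management tool for agencies": ["g2.com", "capterra.com", "yourbrand.com"],
    "project management software comparison": ["g2.com", "reddit.com", "wirecutter.com"],
}

# Made-up example: cited domains where you confirmed your brand is mentioned.
sources_mentioning_us = {"capterra.com", "yourbrand.com"}


def citation_gaps(cited_sources, sources_mentioning_us):
    """For each query, list the cited sources that do not mention your brand."""
    return {
        query: [s for s in sources if s not in sources_mentioning_us]
        for query, sources in cited_sources.items()
    }


def citation_map(cited_sources):
    """Count how often each source is cited across all tracked queries."""
    return Counter(s for sources in cited_sources.values() for s in sources)


for query, missing in citation_gaps(cited_sources, sources_mentioning_us).items():
    print(f"{query}: missing from {', '.join(missing) or 'none'}")
print(citation_map(cited_sources).most_common(2))
```

Sources that are both frequently cited in the map and missing your brand are the natural first targets for the earned tiers above.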

The bottom line

Your site is only half the equation.

The AI doesn't trust any single source. It trusts consensus across multiple sources. If your competitor appears in Forbes, Reddit, G2, and a YouTube review, and you only appear on your own website, the model will recommend them. Not because their content is better. Because their presence is wider.

Owned gets you the tail. Earned gets you the head. You need both.

Up next

In the next email: your content has a shelf life, and it's shorter than you think. We'll get into freshness decay, how fast citations expire, and the refresh cadence that keeps you visible.

Talk soon.

This Week’s Signals: AEO is Moving Out of the Lab

The AEO measurement gap is closing fast. This week delivered the clearest proof yet that we’ve hit a threshold: "Does AEO work?" is no longer the question. The question is now "How do I measure it, benchmark it, and report it upstream?"

What Moved This Week

1. Bing Previews "Citation Share": The First Native Benchmarking Tool

At SEO Week NYC, Microsoft previewed Citation Share for Bing Webmaster Tools. This is a landmark moment. It’s the first native tool from a major engine that shows you exactly what percentage of citations you capture for a query relative to your competitors.

- The Signal: Just as keyword rank tracking became the standard KPI for SEO, Citation Share is about to become the standard for AEO.

- Action: If you aren't on Bing Webmaster Tools yet, get there. Their existing AI Performance Dashboard is already the best "keyword research" tool we have for AI search.
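How Bing calculates Citation Share isn't spelled out here, so the Python sketch below simply assumes the intuitive definition: your citations for a query divided by all tracked citations for that query. The function name, domains, and counts are hypothetical.

```python
def citation_share(citations_by_domain, domain):
    """Fraction of tracked citations for one query that point to `domain`."""
    total = sum(citations_by_domain.values())
    if total == 0:
        return 0.0  # no citations tracked yet, so no share to report
    return citations_by_domain.get(domain, 0) / total


# Hypothetical citation counts for one query, e.g. tallied over repeated runs.
counts = {"yourbrand.com": 6, "competitor-a.com": 10, "competitor-b.com": 4}
print(f"{citation_share(counts, 'yourbrand.com'):.0%}")  # 30%
```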

2. The Death of the "Markdown Mirror" (1M+ Citation Study)

A new study from OtterlyAI analyzed 1M+ citations and found a glaring data point: AI engines cite HTML pages almost exclusively. Markdown (.md) files were not cited once.

- The Signal: The advice to publish "Markdown mirrors" for LLMs is officially a myth.

- Action: Stop wasting editorial time on .md mirrors. Consolidate your effort on your primary, well-structured HTML domain. That is where GPT-5.4 and others are actually looking.

3. The Four Variables That Rank You Within the Answer

It’s not just about being cited; it’s about where you are cited. New analysis shows that Mention Order is king: up to 74% of users act on the first source mentioned in an AI response.

- The Signal: The models prioritize sources that use "Authority Framing" (e.g., "industry standard," "established methodology") over hedged or tentative language.

- Action: Audit your top 10 queries. If you’re cited 4th or 5th in a list, you're losing the click. Rewrite your content with more confident, definitive framing to move up the "in-answer" rank.
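For that audit, one rough way to check Mention Order in an answer you copied from ChatGPT or Perplexity is to find where each brand first appears. A minimal Python sketch, assuming plain case-insensitive substring matching (so it would also match a brand name inside a longer word); the brand names and answer text are fictional.

```python
def mention_order(answer, brands):
    """Return the brands that appear in `answer`, ordered by first mention."""
    text = answer.lower()
    first_seen = {}
    for brand in brands:
        index = text.find(brand.lower())
        if index != -1:  # -1 means the brand is not mentioned at all
            first_seen[brand] = index
    return sorted(first_seen, key=first_seen.get)


answer = (
    "Acme is the industry standard for most teams. Globex suits small "
    "budgets, and Initech works well for agencies."
)
print(mention_order(answer, ["Initech", "Globex", "Acme", "Umbrella"]))
# ['Acme', 'Globex', 'Initech']
```

Running this over a saved answer for each of your top queries gives a quick position per brand, which you can compare week over week.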

4. The Winning Formats: FAQs and Comparisons

Research data from 2026 shows a clear hierarchy in what gets cited. FAQ pages, definitional content, and comparison pages are generating the highest citation rates.

- The Signal: These formats have the lowest "interpretive overhead" for the AI.

- Action: Audit your content gaps. Most B2B teams are missing Entity Pages (authoritative references for people/products) and Comparison Pages. Fill those gaps before you write your next blog post.

Tool Spotlight: Profound ($35M Series B)

Profound just closed a major funding round, signaling that enterprise-grade AEO measurement is now a durable budget line. They are tracking citations across 10+ engines with 400M+ prompt insights.

- Why it matters: When institutional capital moves at this scale, it means AEO is no longer "experimental." It's an enterprise software category. You can track the full landscape of these tools at The AI Search Directory.

The Takeaway

We are crossing the threshold from "best guesses" to "measurable discipline." Between Bing’s native tools and the OtterlyAI data, the rules are being written in real-time.

Your move this week: Pick your most important head query, run it in ChatGPT or Perplexity, and look at the Mention Order. If you aren't first, look at the language the first-place source used.

Sources: Email Course Part 4

- AEO Basics by Ethan Smith/Graphite: For the Owned vs. Earned strategic framework. Listen to the discussion here.

- Graphite/Webflow Case Study: For the specific Reddit/YouTube citation data and the importance of tracking signals. Read the full breakdown on Webflow's Blog.

- Profound Marketer's Playbook: For the eatthis.com discovery and the 33% listicle statistic. Read Profound's 2025 AEO Guide here.

- RAG (Retrieval-Augmented Generation) Architecture: Standard LLM mechanics demonstrating how AI triangulates multiple retrieved documents to form a consensus. Read IBM's explainer on RAG here.

- Google / Reddit Data API Partnership (2024): Highlighting the $60M deal that proves search engines and LLMs are actively prioritizing forum-based consensus data for training and citations. Read more on the deal's implications.

Signal Sources & Further Reading

- Bing AEO Dashboard: Official Bing Webmaster Blog Announcement

- OtterlyAI Study: 1M+ Citation Analysis 2026

- Profound $35M Series B: Enterprise AEO Maturation Report

- Search Engine Land: The Four Variables of AI Ranking

- AEO Content Formats: SEJ Webinar: 2026 Citation Data